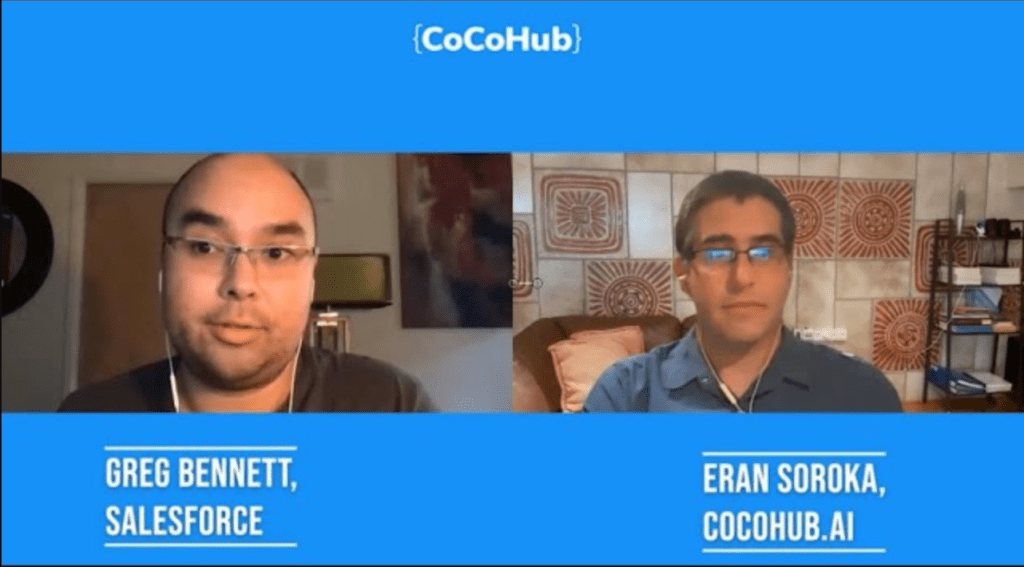

Sometimes, the most random turn of life can take you to the greatest places. For Greg Bennett, conversation design lead at Salesforce in San Francisco, it was a memorable end to a relationship that drove him to become a world-leading expert on the connection between linguistics and conversation design, with an MA and a BA from Georgetown University. In the 6th episode of Taking Turns, Bennett took the time to dive into the deepest details of conversation design. It’s longer than usual, but it’s worth your time.

• HOW DID YOU GET INTO CONVERSATION DESIGN? AND HOW IS IT RELATED TO AN UNFORTUNATE ROMANTIC RELATIONSHIP?

You already know the story and I’m happy to tell it. So I started out actually in the field of linguistics. My background academically is in linguistics for both my undergraduate and graduate work. And I started really delving into a specific piece of linguistics, called discourse analysis. It focuses very heavily on analyzing language in human interaction in everyday use.

I focused on that particular part of linguistics because I got dumped over IM chat as a sophomore in college. And the reason why I was really sort of fixated on it was, first of all, when you’re 19 and it’s your first major heartbreak, you’re looking for answers. So as a researcher by training and someone who’s very curious and analytical by nature, I wanted to figure something out. How was it that a few days before the actual breakup, I could feel it coming, even though this other person and I were talking over chat? How could I feel him being colder to me when I couldn’t see his face and couldn’t hear his voice?

That’s when I really started analyzing language in online and sort of synchronous interaction without your voice or your gestures. It really led me to understanding – ok, users will recruit from every possible resource they have in an online space to try and convey what we call in linguistics contextualization cues.

• WHAT ARE CONTEXTUALIZATION CUES?

Contextualization cues are a phenomenon that was discovered by the sociolinguist John Gumperz. He was at UC Berkeley. And he essentially posited that contextualization cues are all of those extra layers of meaning that you give on top of the dictionary meaning of what you say. So you have a semantic layer, which is the core meaning, the meaning of the words themselves. Then you have intonation, facial gestures, what my eyebrows are doing, hand gestures, eye gaze, even pausing, all of that.

Those are all contextualization cues that essentially signal what another linguist, Deborah Tannen, calls a metamessage to the other person in the conversation, to say: here’s some extra meaning. Here’s how I’m sort of packaging up, or setting the framing around, whatever it is that I’m saying.

What does “.” mean?

So in an online space, you might see something like playing with textuality. You have CAPS, which makes people think that the other person is yelling. If you usually send messages without a “.” at the end of your utterance, and then you use one, that means something. Or there are italics or emojis. All of those things add that extra layer of contextualization cues to what we’re saying.

And so, to close the loop here: the reason why I felt the breakup coming was because my ex started to pare back some contextualization cues. He started adding in the “.” marks. And he was using fewer emojis and emoticons, and that feels colder, because it’s essentially creating that distance.
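As a rough illustration of the textual cues described above (caps, a final period, emojis and emoticons), here is a minimal Python sketch. The function name `text_cues`, the tiny `EMOTICONS` list and the Unicode-category check for emoji are all assumptions made for this example, not anything from Bennett's work.

```python
import unicodedata

# Hypothetical, deliberately tiny emoticon list; real chat needs far more.
EMOTICONS = (":)", ":(", ";)", ":D")


def text_cues(message):
    """Collect a few surface-level contextualization cues from a chat message."""
    stripped = message.rstrip()
    # Rough approximation: most emoji fall in Unicode category "So"
    # (Symbol, other), but so do some non-emoji symbols.
    emoji = sum(1 for ch in message if unicodedata.category(ch) == "So")
    emoticons = sum(message.count(e) for e in EMOTICONS)
    return {
        "all_caps": message.isupper(),
        # A single final period counts; an ellipsis does not.
        "final_period": stripped.endswith(".") and not stripped.endswith(".."),
        "emoji_count": emoji + emoticons,
    }


# Comparing an earlier and a later message from the same person:
earlier = text_cues("sounds good \U0001F600")
later = text_cues("Sounds good.")
print(earlier)
print(later)
```

Comparing the two dictionaries over time is one simple way to notice someone paring back their cues, although what a change means still depends on that person's usual style.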


• WHAT’S THE PROJECT YOU’RE MOST PROUD OF?

At Salesforce, we have the Einstein Bot Builder. I started out as a user researcher, and was doing conversation design as part of my role. It just wasn’t the primary focus of my role; now I’m primarily a conversation designer who sometimes does research.

The interesting piece about being a UX professional on a chatbot builder platform is that, rather than directly applying linguistics to create conversation designs that customers will experience directly, I get to be more of a teacher, a guide, for anyone who’s building conversation designs for themselves. And so I think the thing that I’m probably the proudest of on the Einstein Bot Builder is that it articulates our viewpoint on conversation design and democratizes linguistics for people who aren’t necessarily as familiar with it.

Personally, I’m proud that I was part of an effort to remove the term ‘misspelling’ from the bot builder. When you’re training a bot, particularly for NLU, when it comes to intents, you have to train it with different phrases and different utterances so that it can essentially understand the customer, the user. One thing in the bot builder when I got on the team was the idea of normalizing your data. This is something a data scientist would be familiar with: the idea that you have to get rid of those sort of outlier spelling variations.

And that framing got us ‘misspelling’ as a way to kind of colloquially tell people, ‘hey, clean your data’. I sort of took umbrage with that, in the sense that when you have the term ‘misspelling’, the presumption, or the presupposition, is that there’s a right and a wrong way to spell something in language. And because we’re talking about conversation, there’s so much of your social identity that you very effectively encode in what you say and how you say it, especially in typed chat.

To get rid of what we would consider a misspelling is to say that whatever spelling variation the user is using is wrong because it’s not considered standard, and then to erase an expression of their identity by taking it out of the dataset.

And I brought this up with my fellow UX designer Molly Mahar. Then she and I went to our product folks, content experience folks and stakeholders, and said: hey, I think we should take the term ‘misspelling’ out of the bot builder. We don’t want to make a judgment call on how people are using language. We want to encourage robust training of the dataset. That way, if I’m spelling “Yass” instead of “yes” to try and exude a part of my identity, then I want to make sure that the bot understands that.

• AND IS THE BOT TRYING TO “FIT” ITSELF INTO THIS CONVERSATION?

…Or just be able to understand it. For example, say the bot said ‘Did I take care of everything for you?’, and I typed back, ‘YASS!’. If I’m super excited about how good of a job the bot did, and I’m trying to encode and express the intersection of my identities in language, then YASS is the word to use. And the worst response for the bot would be ‘I’m sorry, I don’t understand’.

So it’s essentially about trying to train the bot to be a better listener, so that it can understand, the same way that you would for a voice product. There, you’d train it on different accents and pronunciations. In a text-based bot, all of those different characterizations come out in spelling, so you want to train it on those variations as well.
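To make the idea of training on spelling variations concrete, here is a minimal Python sketch. It is not how Einstein Bot Builder works; the intent names, the training phrases, the `classify` function and the 0.8 similarity threshold are all hypothetical, and the matching uses the standard library's `difflib.SequenceMatcher` purely for illustration.

```python
from difflib import SequenceMatcher

# Hypothetical training data: spelling variants are kept as first-class
# examples instead of being "cleaned" out of the dataset.
TRAINING_UTTERANCES = {
    "affirm": ["yes", "yep", "yeah", "yass", "yasss", "yaaas"],
    "deny": ["no", "nope", "nah", "not really"],
}


def classify(utterance, training=TRAINING_UTTERANCES, threshold=0.8):
    """Return the intent of the most similar training phrase, or None."""
    text = utterance.lower().strip(" !?.")
    best_intent, best_score = None, 0.0
    for intent, phrases in training.items():
        for phrase in phrases:
            score = SequenceMatcher(None, text, phrase).ratio()
            if score > best_score:
                best_intent, best_score = intent, score
    # Below the threshold the bot would fall back to "I don't understand".
    return best_intent if best_score >= threshold else None


# With variants in the training data, "YASS!" is understood.
print(classify("YASS!"))

# With only the "standard" spellings, the same message falls through.
standard_only = {"affirm": ["yes"], "deny": ["no"]}
print(classify("YASS!", training=standard_only))
```

The design choice being illustrated is only that the variants live in the training data; a production NLU model would learn from them rather than compare strings.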

• WHAT’S THE ONE THING EVERY GOOD CHATBOT/VOICE ASSISTANT MUST HAVE?

I’m very biased here: the conversation design. Whether it’s chat or voice, I really think about conversational experiences across a spectrum. On one end you have text-forward conversation, on the other end you have voice-centric conversation, and voice-forward conversation is somewhere in the middle. With text-forward conversation, the core of the experience really is the text itself. There might be graphics, menus and image carousels that you can essentially leverage to enhance the conversational experience. But fundamentally, at its core, it’s about the text.

And then voice-forward conversation is similar in that you might be having it on a computer or on a phone. It might have some graphical elements where it’s transcribing what the user or the system is saying, and some other kinds of cues, but it’s very much about the voice. Then on the farthest end you have voice-centric conversation, where it might be something like Alexa or Google Home, a physical device with very few and limited visual cues, and it’s pretty much solely the voice that carries the experience. And I think the common thread across all of those is that conversation is now the UX.

Stop the messages onslaught

It’s not necessarily the graphical design. It’s the conversation design that is steering the entire experience. So say it can’t understand YASS. Or the bot has a very short response delay, so all the instructions that come from the chatbot arrive with no pauses, just an onslaught of messages. Or it’s a voice assistant but you have designed it as a text bot, so it reads out a menu of all the things that it can do.

All of those things aren’t necessarily the best sort of experience. They’re going to impede the user’s trust in the system and its usability, the ability to actually accomplish a task. And in that regard (at Salesforce, trust is our number one value), in order to enable and encourage trust with users, it’s very important to get the conversation design precise and to get it strategic. Because if not, then you lack consistency, and the usability starts to fail.
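One way to avoid the message onslaught described above is to pace a chatbot's messages. The Python sketch below is only an illustration under stated assumptions: the function name `pacing_delays`, the 30 characters per second rate and the half-second and three-second bounds are invented for this example and are not recommendations from the interview.

```python
def pacing_delays(messages, chars_per_second=30.0, min_delay=0.5, max_delay=3.0):
    """Return a pause, in seconds, to wait before sending each message.

    Longer messages get longer pauses, clamped so that short messages
    are not instant and long ones do not stall the conversation.
    """
    delays = []
    for text in messages:
        raw = len(text) / chars_per_second
        delays.append(max(min_delay, min(max_delay, raw)))
    return delays


script = [
    "Got it!",
    "To reset your password, open Settings and choose Security.",
    "Then select Reset password and follow the steps on screen.",
]
for message, delay in zip(script, pacing_delays(script)):
    # A real bot would wait `delay` seconds (and perhaps show a typing
    # indicator) before sending; here we only print the plan.
    print(f"wait {delay:.1f}s, then send: {message}")
```

The right values would need to be tuned with real users, since pauses that are too long carry their own meaning.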

• TELL US ABOUT AN AWKWARD, AMAZING OR SURPRISING THING THAT HAPPENED TO YOU AS A CONVERSATION DESIGNER.

I’m very much a nerd at heart. I grew up watching Godzilla movies and playing Pokemon games and stuff like that. I don’t call myself a gamer, I don’t play that much, but I’m a sucker for a good Easter egg. And sometimes, when I’m doing some kind of conversation design vision for products in my conversation design role at Salesforce, I like to drop little Easter eggs in there. For example, if I’m doing a POC or a vision prototype, maybe the name of one of the participants is a reference to some piece of media.

So very recently I did a vision demo for a voice product that we’re working on. It’s very important for me to convey, through a processing sound, that the system is essentially taking time to accomplish a task. And it’s a tough balance to strike. At least in American English conversation, a pause that’s longer than about a second is essentially a predictor, a signifier. Anita Pomerantz discovered in 1984 that it’s sort of like a precursor for a negative response. It indicates that maybe some trouble is coming in the conversation.

The machine can’t type

And so we want to make sure that in a voice interaction, if a pause is taking longer than a second, we fill it somehow with something. I saw a thread on Twitter recently that said that if you’re calling into one of these more natural IVRs, where you can just speak aloud rather than, you know, pressing 1 to advance through a menu, users find it disingenuous if it sounds like the machine is typing, because it’s not human.

And so I thought: okay, I’m going to do a demo, a vision design, and I want to fill the pause with something, so I decided to put in a little Easter egg. If you’re familiar with the original Pokemon games from the 90s, when you go to the Pokemon Center and you want to heal your Pokemon, it goes ‘click click click click click click’ as the Pokeballs fall into the slots, and then it’s like ‘Tin-Tin-Tinanan’.

So I used that 8-bit tone from when it’s healing the Pokemon to essentially convey: okay, I’m processing something for you. I didn’t tell anybody else. I thought, let’s just see how it works, and it’s just for now, just for the sake of a placeholder. I like to have fun with conversation design. I like to draw a lot from my experiences with pop culture. And I enjoy using Easter eggs to help make that a fun experience.
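The rule of thumb from this story, filling any pause longer than about a second with a processing sound, can be sketched in a few lines of Python. The names `plan_turn` and `PAUSE_THRESHOLD_S` and the event labels are hypothetical, and the exact one-second value is an assumption based on the "about a second" figure above.

```python
PAUSE_THRESHOLD_S = 1.0  # assumption: "about a second" of silence


def plan_turn(processing_time_s, threshold_s=PAUSE_THRESHOLD_S):
    """Return (event, start_time_s) pairs for one system turn.

    If the system needs longer than the threshold to respond, a filler
    tone starts at the threshold so the silence never grows longer.
    """
    events = []
    if processing_time_s > threshold_s:
        events.append(("filler_tone", threshold_s))
    events.append(("spoken_response", processing_time_s))
    return events


print(plan_turn(0.4))  # quick answer, no filler needed
print(plan_turn(2.5))  # slow answer, filler tone covers the gap
```

What the filler actually sounds like is a design decision; the sketch only covers when to play it.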

• WHAT TIPS CAN YOU GIVE TO ASPIRING CONVERSATIONAL DESIGNERS, OR TO PEOPLE WHO WANT TO JOIN THIS PROFESSION?

First, start to mine the internet for communities that deal with voice and conversation and conversation design as a whole. There are so many of them, whether it’s CoCoHub or Robocopy or the Voice Lunch, or even the chatbot & voice Summit that’s based out of Tel Aviv. There are so many different organizations right now that are producing content and bringing the conversation design community closer together.

In terms of learning, I’m pretty good friends with Hans van Dam, CEO of Robocopy, and their content is great. The vision for what he and his team are doing is really strong. And so I encourage folks to take a look at that material as well.

And if you’re into learning the linguistics around it, my favorite is probably what I call “Pop Linguistics”, which is like linguistics for non-linguists, and which is not too difficult to grasp.

My favorite go-to book is “That’s Not What I Meant!” by Deborah Tannen. It’s a pretty quick read. She writes really accessibly, so I think anybody can understand it, grasp it and relate to it. It really covers the nuances of conversational style and how it materializes linguistically. It’s also about how that can essentially guide relationships through conversation. And I think that’s fundamentally what we’re talking about achieving with voice and chat assistants.

• TELL US MORE ABOUT YOUR COMPANY.

I’m very, very privileged and grateful to work for Salesforce. It’s a company that has really great values and is very much invested in customer success. We think about the customer first, and our number one value is trust. And that really comes down to what we are doing to make sure that we’re doing right by the customer, not just because it’s the right thing to do, but also because trust with a customer enables customer success at scale. And I think that, given everything that’s going on in the world right now, I’m very proud that Salesforce has taken a proactive stance on helping as many people as it can.

That means getting PPE, thinking through safe mechanisms for returning to work, and thinking about its impact on the community around it. San Francisco is the home of Salesforce, and Salesforce is very conscious about the footprint it has on the city. It’s making sure to take care of not just the city itself, but also its people and people around the world. It’s very much a culture of giving back.

Holistically, through its offering in CRM, the thing that I find really inspiring about Salesforce is that it’s about taking this technology that seems analytical and making it come alive for people, so that we can change their lives and really help make the world a better place.
